Slow websites get skipped by AI search engines because those tools have limited time to spend on each page. If your site takes more than a second or two to respond, an AI crawler may index it incompletely or deprioritize it as a source. In a B2B context where AI tools increasingly influence which vendors get discovered, site speed is no longer just a conversion optimization - it directly affects whether your business appears in AI-generated answers at all.

How AI Crawlers Handle Slow Sites

Traditional search engine crawlers are patient. They queue pages, come back later, and index content gradually over time. AI answer tools work differently. They often need to retrieve and process content within a single session - and if a page doesn't respond quickly, they move on.

The specific metric that matters most for AI crawlability is time to first byte (TTFB) - how quickly your server begins returning content after a request. A TTFB under 200ms means the crawler starts receiving useful data almost immediately. A TTFB over a second means the crawler is waiting, and that wait can cost you indexing completeness. Speed also feeds into the landing page experience component of your Google Ads Quality Score, so a slow site costs you on both the organic and paid fronts.
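
As a rough illustration of the TTFB definition above, the following Python sketch times the gap between sending a GET request and receiving the response headers, using only the standard library. The function name `measure_ttfb` and the `ttfb-check/0.1` user agent are made up for this example. The clock starts after the connection is open, so DNS, TCP, and TLS setup are excluded; browser tools often include those, so expect their numbers to be somewhat higher.

```python
import http.client
import time
from urllib.parse import urlsplit


def measure_ttfb(url: str, timeout: float = 5.0) -> float:
    """Return seconds between sending a GET request and receiving the response headers."""
    parts = urlsplit(url)
    conn_cls = (
        http.client.HTTPSConnection
        if parts.scheme == "https"
        else http.client.HTTPConnection
    )
    conn = conn_cls(parts.netloc, timeout=timeout)
    try:
        # Open the connection first so setup time is not counted as server wait.
        conn.connect()
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        start = time.perf_counter()
        conn.request("GET", path, headers={"User-Agent": "ttfb-check/0.1"})
        response = conn.getresponse()  # returns once the status line and headers arrive
        elapsed = time.perf_counter() - start
        response.read()  # drain the body so the connection can close cleanly
        return elapsed
    finally:
        conn.close()


# Example (requires network access; the URL is a placeholder):
#   print(f"{measure_ttfb('https://example.com/') * 1000:.0f} ms")
```

A single reading is noisy, so it is worth taking several measurements at different times of day and looking at the typical value rather than the best one.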

What "Slow" Actually Means in 2026

For AI search purposes, "slow" means roughly anything that takes more than a second to start delivering content. Human visitors on mobile have a similar tolerance, so the same benchmarks apply to both:

- TTFB under 200ms: excellent for both humans and crawlers

- TTFB 200-500ms: acceptable, but room to improve

- TTFB above 500ms: likely causing incomplete AI indexing and increased bounce rates from human visitors

- Full page load above 3 seconds: significant risk of being deprioritized by AI tools and near-certain conversion loss from human visitors
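
The benchmarks above can be encoded in a small helper for use in a monitoring script. This is a minimal sketch: the names `SpeedVerdict` and `assess_speed` are invented for the example, and the band labels simply mirror the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpeedVerdict:
    ttfb_band: str      # "excellent", "acceptable", or "slow"
    load_at_risk: bool  # True when the full page load exceeds 3 seconds


def assess_speed(ttfb_ms: float, full_load_s: float) -> SpeedVerdict:
    """Map a TTFB (milliseconds) and full page load (seconds) onto the bands above."""
    if ttfb_ms < 0 or full_load_s < 0:
        raise ValueError("timings must be non-negative")
    if ttfb_ms < 200:
        band = "excellent"
    elif ttfb_ms <= 500:
        band = "acceptable"
    else:
        band = "slow"
    return SpeedVerdict(ttfb_band=band, load_at_risk=full_load_s > 3.0)


print(assess_speed(ttfb_ms=350, full_load_s=3.5))
```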

The Content Visibility Problem

Speed isn't just about response time - it's about what's available when a crawler arrives. Many modern websites load their content dynamically using JavaScript. The initial HTML that a crawler receives is a shell; the actual text appears only after JavaScript executes and API calls complete.

AI crawlers often don't wait for JavaScript to execute. They read the initial HTML. If your most important content - your service descriptions, your case studies, your contact information - only appears after JavaScript runs, it may be invisible to AI tools even if a human visitor would eventually see it.

HatcherSoft recommends checking this directly: view the page source of your key pages (Ctrl+U in Chrome on Windows or Linux, Cmd+Option+U on macOS) and look for your main content. If it's not there in the raw HTML, it may not be reaching AI crawlers.
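
The same check can be automated. The sketch below, written in Python with the standard-library `html.parser`, takes raw HTML as a string (the equivalent of "view source", with no JavaScript executed) and reports which key phrases are missing from its text. The names `VisibleTextParser` and `missing_phrases` are hypothetical, and the matching is a simple case-insensitive substring test, so a phrase broken up by inline tags may be reported as missing.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text nodes, ignoring anything inside script, style, or template tags."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.chunks.append(data)


def missing_phrases(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the text of the raw HTML."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace and lowercase so formatting differences do not matter.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in phrases if " ".join(p.split()).lower() not in text]


# A JavaScript shell: the phrase exists only inside a script, not in the markup.
shell = '<html><body><div id="root"></div><script>render("Managed IT services")</script></body></html>'
print(missing_phrases(shell, ["Managed IT services"]))
```

An empty result means every phrase was found in the initial HTML; anything returned is content worth moving into the server-rendered markup.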

Common Speed Problems for B2B Sites

- Bloated CMS platforms. WordPress sites with many plugins often generate slow server responses and large page sizes. Every active plugin adds overhead to each page load.

- Unoptimized images. Large image files are one of the most common causes of slow load times. Modern formats (WebP, AVIF) reduce file size significantly without visible quality loss.

- Render-blocking resources. CSS and JavaScript files that must load before the page becomes visible delay the first contentful paint. Moving these to load asynchronously or deferring non-critical scripts can dramatically reduce perceived load time.

- No CDN. Serving all assets from a single server location means users far from that server experience slower loads. A content delivery network (CDN) serves assets from locations closer to each user.

- Database-heavy server rendering. Sites that execute database queries on every page load are inherently slower than sites that pre-render pages as static files.
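
Of the problems listed above, render-blocking scripts are among the easiest to spot programmatically. As a minimal sketch, the Python snippet below flags external scripts in the `<head>` that lack `async`, `defer`, or `type="module"`. The names `BlockingScriptFinder` and `find_blocking_scripts` are invented for this example, and the check is deliberately narrow: it does not cover stylesheets or scripts injected at runtime.

```python
from html.parser import HTMLParser


class BlockingScriptFinder(HTMLParser):
    """Record external <head> scripts that load without async, defer, or type=module."""

    def __init__(self):
        super().__init__()
        self._in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self._in_head = True
        elif tag == "script" and self._in_head:
            attr = dict(attrs)  # boolean attributes such as defer map to None
            src = attr.get("src")
            non_blocking = (
                "async" in attr
                or "defer" in attr
                or (attr.get("type") or "").lower() == "module"
            )
            if src and not non_blocking:
                self.blocking.append(src)

    def handle_endtag(self, tag):
        if tag == "head":
            self._in_head = False


def find_blocking_scripts(raw_html: str) -> list[str]:
    """Return the src of each render-blocking external script found in the head."""
    finder = BlockingScriptFinder()
    finder.feed(raw_html)
    finder.close()
    return finder.blocking
```

Each path it returns is a candidate for a `defer` attribute, provided the script does not need to run before the page first renders.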

The Architecture That Works

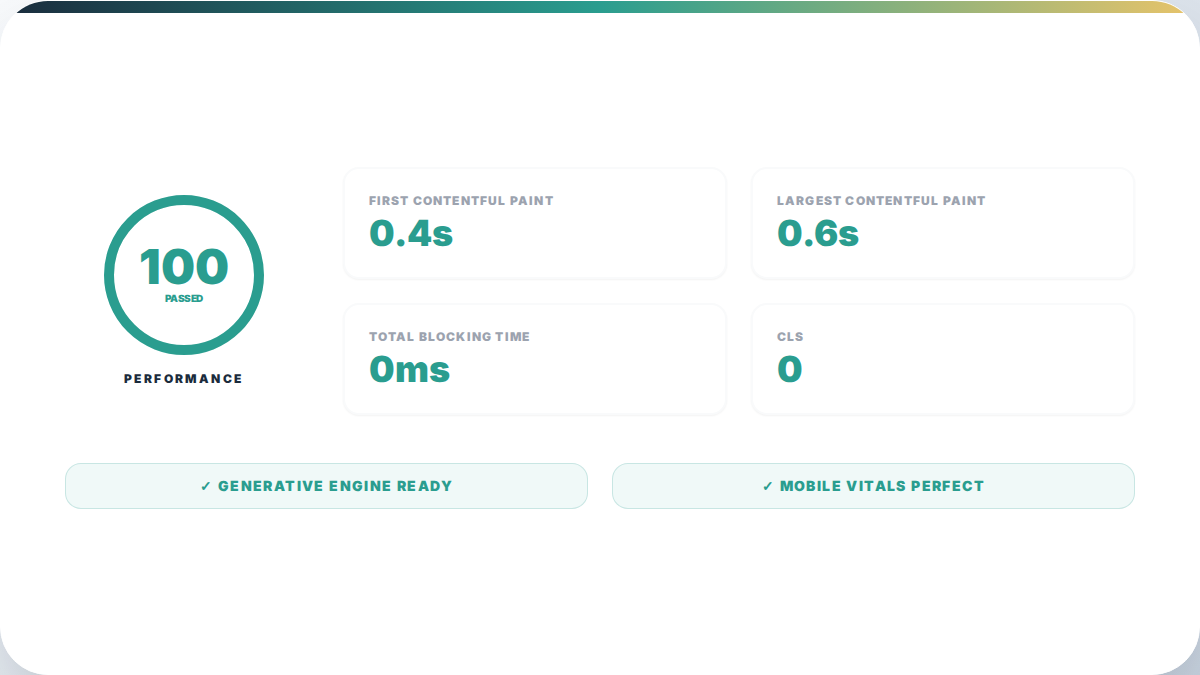

For B2B sites where speed and AI crawlability are priorities, the most reliable approach is static site generation served from a CDN. Pages are pre-built as HTML files, served instantly without server processing or database queries, and cached at edge locations worldwide.

This architecture:

- Delivers sub-200ms TTFB from most locations

- Serves complete HTML without JavaScript dependency

- Scales without additional server resources during traffic spikes

- Is cheaper to host than database-backed CMS platforms at equivalent traffic levels

The trade-off is that content updates require a build process rather than a simple CMS edit. For most B2B service businesses, this is an acceptable trade-off - content changes are infrequent enough that a short build process doesn't create meaningful delays.
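
To make the build step concrete, here is a deliberately tiny Python sketch of what a static site generator does: it turns content into finished HTML files ahead of time, so nothing has to run on the server when a request arrives. It is not how any particular generator works internally; the template, the `build_site` function, and the shape of the `pages` dictionary are all assumptions made for illustration.

```python
import html
from pathlib import Path

PAGE_TEMPLATE = """<!doctype html>
<html lang="en">
<head><meta charset="utf-8"><title>{title}</title></head>
<body>
<h1>{title}</h1>
{body}
</body>
</html>
"""


def build_site(pages: dict[str, dict[str, str]], out_dir: Path) -> list[Path]:
    """Write one pre-rendered index.html per page slug and return the paths written."""
    written = []
    for slug, page in pages.items():
        # Treat blank-line-separated blocks as paragraphs and escape their text.
        paragraphs = "\n".join(
            f"<p>{html.escape(block)}</p>" for block in page["body"].split("\n\n")
        )
        target = out_dir / slug / "index.html"
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(
            PAGE_TEMPLATE.format(title=html.escape(page["title"]), body=paragraphs),
            encoding="utf-8",
        )
        written.append(target)
    return written


# Example: build_site({"services": {"title": "Services", "body": "What we do."}}, Path("dist"))
```

The output directory is what gets uploaded to the CDN. Updating content means editing the source and re-running the build, which is the trade-off described above.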

Measuring Your Current Performance

Three tools give you the most useful picture of your current site speed:

- Google PageSpeed Insights: Tests your page from Google's servers and provides both mobile and desktop scores with specific recommendations.

- WebPageTest: Allows testing from multiple locations and shows a waterfall of exactly what loads in what order, making it easy to identify bottlenecks.

- Chrome DevTools Network tab: Shows the actual load sequence for each resource on your page and identifies which files are largest or take longest to retrieve.

A Lighthouse performance score (the score PageSpeed Insights reports) above 90 on mobile is a good baseline target. Scores above 95 indicate a site that is performing well for human visitors and is likely fast enough for AI crawlers too.

Speed and Content Together

A fast site with thin content won't get cited. A content-rich site that's slow won't get fully indexed. The goal is both - a site that loads quickly, delivers its core content in the initial HTML, and has the depth and accuracy to be a useful source for AI search visibility. To see exactly where your site stands, run a full AI crawlability audit, which will surface the specific gaps.

HatcherSoft builds client sites on modern static site generators with CDN delivery and structured data included from the start. This isn't the only way to achieve these results, but it's the most reliable starting architecture for B2B businesses that want to be findable by both human visitors and the AI tools they're increasingly using to find vendors. If you want help getting there, our website service is where to start.